From my background of film SLR use and training, the above comments open a Pandora's box of questions. At the risk of boring everyone, here are the ones I've thought of so far:

1) Bit Depth -- This, at least, is easily quantifiable and, if I understand correctly, tells the maximum possible dynamic range of a file (1 additional bit ideally equivalent to 1 F-stop). Right?

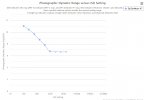

2) Dynamic Range (DR below for short) -- This depends on the sensor itself (and the electronics, processing, etc.) in some way that I don't fully understand. Clearly DR is what we really care about, but it's more difficult for the user to measure in the absence of a physical variable diaphragm. I hadn't thought about it, but I suppose the exposure can greatly affect DR, since overexposure kills the upper end of DR (sensor can't react to differences there). Similarly underexposure kills the lower end of DR. Right?

3) D-Log -- This is entirely new to me. Is it just using the electronics (or processing?) to pack more DR into the same number of bits with logarithmic scaling? If so, doesn't it dramatically vary the brightness resolution from low to high brightness? How do you make the result look realistic in subsequent image processing?

4) ISO -- This is something I've never understood in digital cameras. (I know from experience that increasing ISO increases the noise level. In film this is because high-ISO films use larger grain size.) What's actually going on (in the electronics and processing?) that allows the same chip to appear more light-sensitive in the absence of a physical variable diaphragm?

Obviously I'm suffering from a lot of ignorance here! I come from a physics background (through college), so I ought to be teachable without too much effort. Can somebody address each of these four questions for me (albeit briefly), or at least point me to a good reference that explains them? -- jclarkw

I apologize if I have completely failed at your "briefly" request. I tried to make it understandable from a photographer's point of view - which is where I have the most experience and how I came to drones.

1. Bit depth matters when the analog input is converted to digital. Higher numbers mean more steps. For example - 8 bit video (8 bits per color channel) can show 16.7 million colors while 10 bit can display 1.07 billion colors.

While this may sound like a lot, areas of nearly solid color like forests, lakes and sky may have only tiny differences in color, and banding will show with the usual .jpg compression at lower bit depth. That is another reason to shoot RAW as much as possible.
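For anyone who wants to check the color counts above, here is a minimal sketch of the arithmetic. The function names are my own, and the "5% of the tonal range" figure for a sky gradient is just an illustrative assumption, not a measurement.

```python
def levels_per_channel(bits: int) -> int:
    """Number of distinct steps one color channel can take."""
    return 2 ** bits


def total_colors(bits: int, channels: int = 3) -> int:
    """Distinct colors when every channel has the same bit depth."""
    return levels_per_channel(bits) ** channels


def steps_across(bits: int, fraction: float) -> int:
    """Whole steps available to a gradient that spans only a
    fraction of the full tonal range (illustrative assumption)."""
    return int(fraction * (levels_per_channel(bits) - 1))


for bits in (8, 10):
    print(bits, "bit:", levels_per_channel(bits), "levels per channel,",
          total_colors(bits), "colors,",
          steps_across(bits, 0.05), "steps across a 5% gradient")
```

The last number is the one that matters for banding: a smooth sky that only uses a small slice of the range has very few steps to work with at 8 bits.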

2. Dynamic range is the combination of sensor range and electronic post processing in a digital camera system. It ranges from where the darkest blacks merge with the noise up to the whitest whites that saturate the sensor, beyond which no increase can be measured. Again, shooting in RAW will provide the greatest dynamic range.
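Since you come from physics, it may help to see that definition as a ratio expressed in stops. This is only a sketch: the electron counts below are made-up round numbers for illustration, not the specs of any real sensor.

```python
import math


def dynamic_range_stops(saturation_e: float, noise_floor_e: float) -> float:
    """Dynamic range in stops: log2 of the largest measurable signal
    (sensor saturation) over the smallest (the noise floor)."""
    return math.log2(saturation_e / noise_floor_e)


# Hypothetical sensor: saturates at 20000 electrons, noise floor of 5 electrons.
print(round(dynamic_range_stops(20000, 5), 2), "stops")

# Underexposing by one stop halves the brightest recorded signal while the
# noise floor stays put, so one stop of usable range is lost at the bottom.
print(round(dynamic_range_stops(10000, 5), 2), "stops")
```

This also ties back to your question 1: with a linear encoding, an n-bit file cannot hold more than roughly n stops, so bit depth is a ceiling on dynamic range rather than a measure of it.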

3. D-log is a special curve that tries to carefully pack the exposure of your video into the broadest range so detail is not lost in the shadows and highlights aren’t overblown. The videos straight out of the camera look flat and this is on purpose so you can post process by adding saturation and contrast, etc., until you get the desired look. Also, if you are stitching videos, it’s much easier to process if you have room to increase the saturation and contrast without exceeding the limits of dynamic range in the photo/video and have the stitched parts match in color.

Some people are happy with the DJI processed video which uses a color profile that is already saturated and contrasty. If you wanted to further process that video, you may find that there’s no room to add more without exceeding the dynamic range, which is why photographers/videographers want to shoot in the flatter color profiles.
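To make the "pack the exposure" idea concrete, here is a sketch comparing a plain linear encoding with a generic log curve. To be clear, this is NOT DJI's actual D-Log formula (I'm not quoting that here); the curve and its constant `a` are assumptions chosen only to show the principle. It counts how many 10-bit code values land in each stop, from the brightest stop down.

```python
import math


def linear_encode(x: float, bits: int = 10) -> int:
    """Map linear light x in [0, 1] straight onto the code range."""
    return round(x * (2 ** bits - 1))


def log_encode(x: float, bits: int = 10, a: float = 1000.0) -> int:
    """Generic log curve (an assumption, not D-Log itself)."""
    return round(math.log1p(a * x) / math.log1p(a) * (2 ** bits - 1))


def log_decode(code: int, bits: int = 10, a: float = 1000.0) -> float:
    """Inverse of log_encode: recover linear light for grading."""
    return math.expm1(code / (2 ** bits - 1) * math.log1p(a)) / a


def codes_per_stop(encode, stops: int = 8, bits: int = 10) -> list:
    """Code values spent on each stop; stop k spans [2**-(k+1), 2**-k]."""
    return [encode(2.0 ** -k, bits) - encode(2.0 ** -(k + 1), bits)
            for k in range(stops)]


print("linear:", codes_per_stop(linear_encode))
print("log:   ", codes_per_stop(log_encode))
```

The linear encoding spends half of all its codes on the single brightest stop and only a handful on the deep shadows, while the log curve spreads them roughly evenly per stop. That even spread is why the footage looks flat, and undoing the curve (here `log_decode`, in practice a grade or LUT in your editor) is what brings back a natural-looking image.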

4. Increasing the ISO doesn’t introduce any magic into the process. It merely takes the signal the sensor produces at its base ISO sensitivity - which doesn’t change - and amplifies it. Just like turning up the volume on your stereo, you’re not making the source any louder, just increasing the gain.

As you increase the gain of the signal (video or audio) you also amplify the noise until the noise is as loud or louder than the signal. That’s why there are upper limits for noise free video/photos depending on the sensor and the chip processing.
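Here is the stereo analogy as a small sketch. It is a simplified model with assumptions of my own: all noise (photon shot noise plus a fixed read noise) is taken to enter before the amplifier, and the electron counts are invented round numbers.

```python
import math


def snr_after_gain(photo_electrons: float, read_noise_e: float,
                   gain: float) -> float:
    """Signal-to-noise ratio after amplification, assuming every
    noise source sits ahead of the amplifier (a simplification)."""
    shot_noise = math.sqrt(photo_electrons)  # photon shot noise
    noise = math.sqrt(shot_noise ** 2 + read_noise_e ** 2)
    # Gain multiplies signal and noise alike, so it cancels in the ratio.
    return (gain * photo_electrons) / (gain * noise)


# Same light on the sensor, different gain: the ratio does not move.
print(snr_after_gain(1600, 3, gain=1), snr_after_gain(1600, 3, gain=16))

# What actually happens at high ISO: 1/16 the light, then 16x gain to get
# the same output brightness. The picture is as bright but much noisier.
print(snr_after_gain(100, 3, gain=16))
```

So the gain itself is not what hurts. The noise comes from starting with less light and then turning up the volume on what little signal there is.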

Generally, larger sensors which have larger photosites can capture more photons per pixel making for a larger signal to noise ratio and thus make available higher noise free ISOs.
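The photosite point can be sketched the same way. When photon shot noise dominates, the signal-to-noise ratio goes as the square root of the photons collected, and the photons collected scale with photosite area. The pixel pitches and the photon density below are hypothetical values picked for round numbers.

```python
import math


def shot_limited_snr(pixel_pitch_um: float, photons_per_um2: float) -> float:
    """Shot-noise-limited SNR for a square photosite: sqrt(photons)."""
    photons = photons_per_um2 * pixel_pitch_um ** 2
    return math.sqrt(photons)


small = shot_limited_snr(2.4, 100)  # hypothetical small-sensor photosite
large = shot_limited_snr(4.8, 100)  # photosite with twice the pitch
print(small, large, large / small)
```

Doubling the pitch gives four times the area, four times the photons, and twice the signal-to-noise ratio for the same exposure.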

As sensor sizes are reduced, more in camera post processing occurs to fend off noise and loss of dynamic range and bit depth which can sometimes make pictures or videos look plasticky and fake.