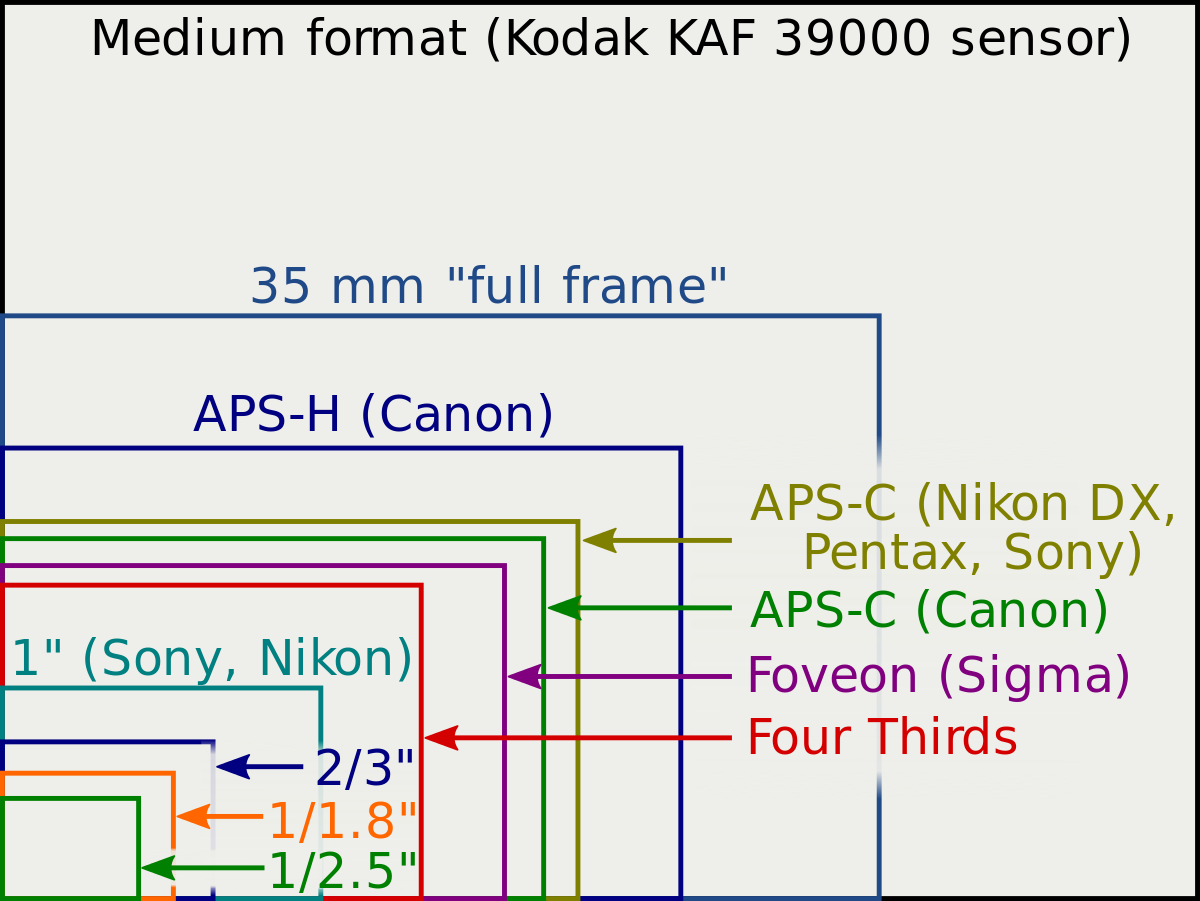

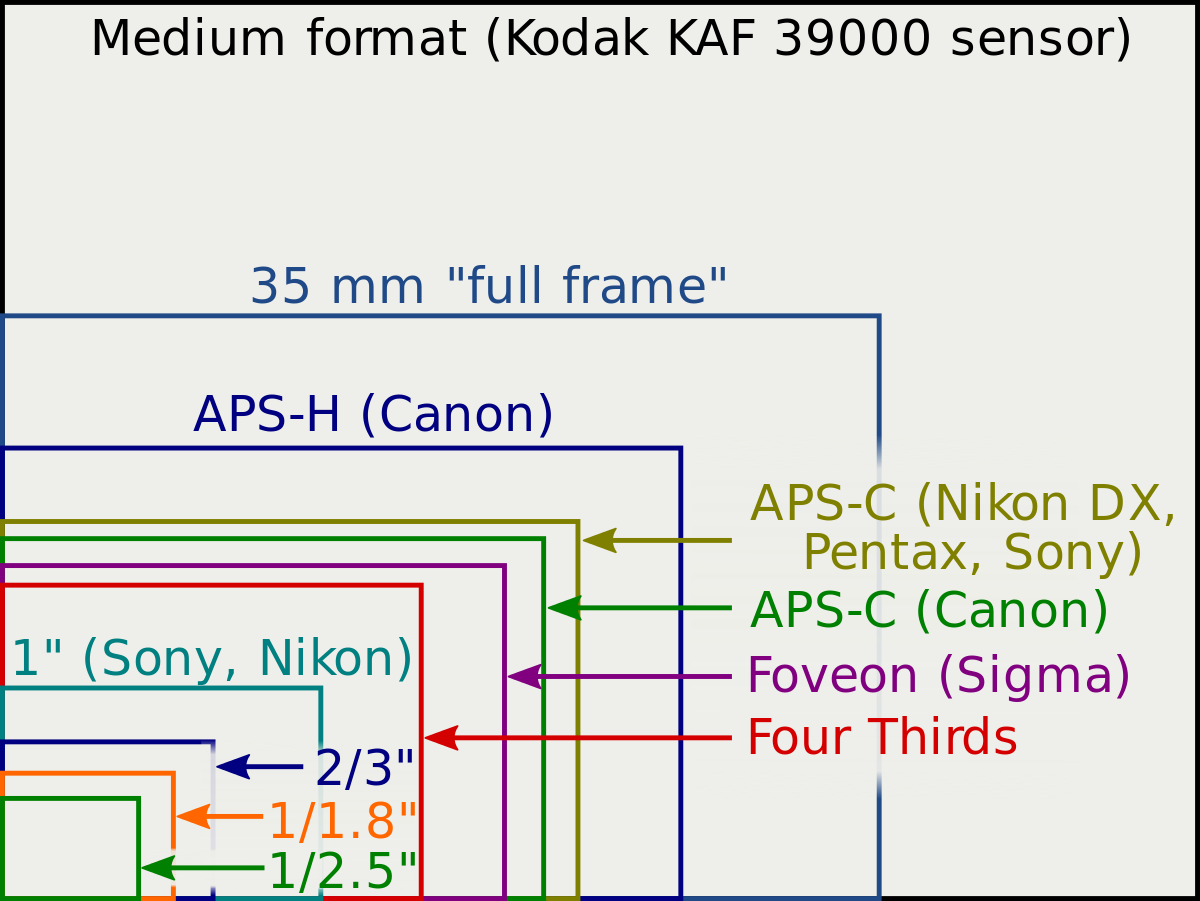

I am trying to figure out the crop factor of the DJI Air 2S CMOS sensor. DJI states that the 35mm-equivalent focal length is 22 mm. A picture that I took tells me the sensor size is 5472 x 3648 pixels. Each pixel is square, with a size of 2.4 µm. This gives a physical size of 13.1328 mm x 8.7552 mm. Using Pythagoras, I calculate a diagonal of 15.78 mm. This is not equal to 1 inch (25.4 mm). Can anybody help here?
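The arithmetic in the question can be checked with a short sketch. It assumes the 2.4 µm pitch applies to every pixel of the 5472 x 3648 image and that "crop factor" means the ratio of the full-frame (36 x 24 mm) diagonal to the sensor diagonal; the "implied real focal length" is only an estimate derived from DJI's 22 mm equivalent figure.

```python
import math

# Values quoted in the post (assumed exact; pixels assumed square).
WIDTH_PX = 5472
HEIGHT_PX = 3648
PIXEL_PITCH_UM = 2.4   # micrometres per pixel
EQUIV_FOCAL_MM = 22.0  # DJI's stated 35mm-equivalent focal length

# Full-frame (35mm) reference format: 36 mm x 24 mm, diagonal ~43.27 mm.
FF_DIAGONAL_MM = math.hypot(36.0, 24.0)


def sensor_size_mm(width_px, height_px, pitch_um):
    """Physical width and height in mm from pixel count and pixel pitch."""
    return width_px * pitch_um / 1000.0, height_px * pitch_um / 1000.0


def crop_factor(width_mm, height_mm):
    """Full-frame diagonal divided by this sensor's diagonal."""
    return FF_DIAGONAL_MM / math.hypot(width_mm, height_mm)


w_mm, h_mm = sensor_size_mm(WIDTH_PX, HEIGHT_PX, PIXEL_PITCH_UM)
diag_mm = math.hypot(w_mm, h_mm)
cf = crop_factor(w_mm, h_mm)
# Estimate only: real focal length = equivalent focal length / crop factor.
real_focal_mm = EQUIV_FOCAL_MM / cf

print(f"sensor: {w_mm:.4f} x {h_mm:.4f} mm, diagonal {diag_mm:.2f} mm")
print(f"crop factor ~{cf:.2f}, implied real focal length ~{real_focal_mm:.1f} mm")
```

With these inputs the sketch reproduces the 15.78 mm diagonal and gives a crop factor of about 2.74. Because 5472 x 3648 is exactly 3:2, the same aspect ratio as 36 x 24 mm, the diagonal-based crop factor also equals the width and height ratios.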


Crop factor for DJI Air 2S

- Thread starter PicPerf


anotherlab

Well-Known Member

You might want to check out an earlier thread on sensor sizes:

mavicpilots.com


Is there a definitive source for DJI drone camera specifications?

Is there a definitive source for DJI drone camera specifications such as sensor size (in pixels) or true focal length (not the 35mm equivalent)? I know that DJI specifies the camera's sensor size using notation such as 1/1.3 but I am looking for the actual sensor size in mm for all DJI drones...

Assuming your math is correct, keep in mind that the maximum photo/video resolution is not always equal to the sensor's full photosite count.

The sensor may have more photosites (not pixels) than the final maximum resolution, in a couple of different ways:

1) Not all photosites on the sensor may be captured: some designed-in slop at the edges is cropped out. This would typically be slight.

2) There may be many more photosites on the sensor than pixels in the final image, with the data processed down to a lower resolution before recording.

This could happen in a couple of ways:

Pixel binning, in which data from groups of adjacent photosites in the active imaging area is combined into a single output pixel (or line skipping, in which some photosites are simply not read);

or resolution reduction during image processing, in which, e.g., a 6K image is downscaled to 4K by the camera's image processor before recording.
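To make these three mechanisms concrete, here is a toy sketch on a small grid of photosite values. It is illustrative only: the function names are hypothetical, and real sensors with a colour filter array typically combine same-colour photosites when binning, which this sketch ignores.

```python
from typing import List

Grid = List[List[float]]


def bin_2x2(readout: Grid) -> Grid:
    """Pixel binning: average each non-overlapping 2x2 block into one pixel.

    Expects even height and width. Colour filters are ignored in this toy.
    """
    h, w = len(readout), len(readout[0])
    if h % 2 or w % 2:
        raise ValueError("this sketch expects even dimensions")
    return [
        [
            (readout[y][x] + readout[y][x + 1]
             + readout[y + 1][x] + readout[y + 1][x + 1]) / 4.0
            for x in range(0, w, 2)
        ]
        for y in range(0, h, 2)
    ]


def skip_every_other(readout: Grid) -> Grid:
    """Line/column skipping: keep every second photosite, discard the rest."""
    return [row[::2] for row in readout[::2]]


def center_crop(readout: Grid, out_h: int, out_w: int) -> Grid:
    """Drop a border of photosites around the edges (point 1 above)."""
    h, w = len(readout), len(readout[0])
    top, left = (h - out_h) // 2, (w - out_w) // 2
    return [row[left:left + out_w] for row in readout[top:top + out_h]]


# Toy 8 x 6 photosite array (8 columns, 6 rows).
sensor = [[float(y * 8 + x) for x in range(8)] for y in range(6)]
binned = bin_2x2(sensor)
print(f"{len(sensor[0])}x{len(sensor)} photosites -> {len(binned[0])}x{len(binned)} pixels")
```

The practical consequence for the original question: if an image had been binned, skipped, or downscaled, multiplying its pixel count by the photosite pitch would understate the sensor's physical size.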

I don’t know specifically what’s going on in the sensor and image processing of an Air 2S. The engineering is interesting. In my opinion, if it looks good, it is good.

EDIT

My reply crossed with @anotherlab above. I re-read the earlier thread they linked, and this popped out as a major third factor:


Notation for sensor size is a whole big can of worms. Basically, the published numbers are usually just a marketing gimmick based on decades-old notation called 'optical format', dating back to when camera sensors were based on vacuum tubes…


waynorth

Well-Known Member

- Joined

- Aug 28, 2018

- Messages

- 902

- Reactions

- 991

You are not the first to be confused about the naming of sensor sizes. A 1-inch sensor does not have a 1-inch diagonal, as you found out.

Here is the explanation why:

Image sensor format - Wikipedia

That’s a great resource, @waynorth; I hadn’t seen it myself.


For those wading through the lengthy page, the marketing definition of a “1-inch sensor” starts here (link), well down the page.
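A small sketch of why a "1-inch" sensor measures about 16 mm rather than 25.4 mm. Two figures here are assumptions to check against the Wikipedia article rather than facts from this thread: that an old camera tube's usable image diagonal was roughly 2/3 of its outer diameter, and the common rule of thumb that, for larger formats, one "optical-format inch" corresponds to about 16 mm of diagonal.

```python
import math

MM_PER_INCH = 25.4
# Rule-of-thumb assumption: for larger optical formats,
# one "format inch" corresponds to roughly 16 mm of sensor diagonal.
MM_PER_FORMAT_INCH = 16.0


def tube_image_diagonal_mm(tube_inches: float) -> float:
    """Usable image diagonal of a camera tube, assumed ~2/3 of its outer diameter."""
    return tube_inches * MM_PER_INCH * 2.0 / 3.0


def optical_format_inches(diagonal_mm: float) -> float:
    """Approximate 'type' label implied by a sensor diagonal (rule of thumb)."""
    return diagonal_mm / MM_PER_FORMAT_INCH


# Diagonal computed in the first post from 13.1328 mm x 8.7552 mm (~15.78 mm).
measured_diag_mm = math.hypot(13.1328, 8.7552)
print(f"1-inch tube image diagonal: ~{tube_image_diagonal_mm(1.0):.1f} mm")
print(f"implied optical format: ~{optical_format_inches(measured_diag_mm):.2f} inch")
```

Under these assumptions the measured 15.78 mm diagonal corresponds to an optical format of roughly 0.99 inch, which is consistent with the "1-inch" label even though no dimension of the sensor is 25.4 mm.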
