We have to start with the definition of dynamic range, which is a tricky subject. Dynamic range generally refers both to the contrast ratio, meaning the difference in luminance between the darkest and brightest tones in an image, and to the number of levels or “stops” between black and white.
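To put rough numbers on the contrast-ratio half of that definition, here is a minimal sketch. It assumes the usual photographic convention that one stop is a doubling of light, so the width of a range in stops is the base-2 logarithm of its contrast ratio. The function names and luminance figures are made up for illustration and are not measurements of any real display. Note that this only measures how wide the range is, not how many distinct levels are encoded inside it.

```python
import math


def contrast_ratio(brightest_nits: float, darkest_nits: float) -> float:
    """Ratio between the brightest and darkest luminance, e.g. 1000.0 for 1000:1."""
    if brightest_nits <= 0 or darkest_nits <= 0:
        raise ValueError("luminance values must be positive")
    return brightest_nits / darkest_nits


def stops_from_contrast_ratio(ratio: float) -> float:
    """Width of a contrast ratio in stops, assuming one stop = one doubling of light."""
    if ratio <= 0:
        raise ValueError("contrast ratio must be positive")
    return math.log2(ratio)


# Hypothetical displays, purely illustrative numbers.
dim_screen = contrast_ratio(100.0, 0.1)       # 1000:1
bright_screen = contrast_ratio(1000.0, 0.05)  # 20000:1

print(f"1000:1 spans about {stops_from_contrast_ratio(dim_screen):.1f} stops")
print(f"20000:1 spans about {stops_from_contrast_ratio(bright_screen):.1f} stops")
```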

Electronic screens are interesting in this regard because they use light-producing pixels to represent scenes that we normally see by the light reflected from them. For example, the contrast ratio of a printed image can at most be the difference between a black pigment that reflects no light and the amount of light the blank white paper can reflect. A screen, however, is a light-producing medium, so the possible contrast ratio is almost infinite, ranging from a black pixel that emits no light all the way up to the maximum number of photons that could occupy the space of a single pixel (many times brighter than the sun).

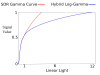

HDR video standards such as HLG, HDR10, Dolby Vision, etc. use the contrast ratio to claim “HDR” by using screens that produce brighter and darker tones (luminance) than a traditional screen. Of note, however, is that the number of levels (stops) between the brightest and darkest parts of the screen remains relatively unchanged. This is because the tones produced above 50% brightness (luminance) are on a logarithmic scale, as in HLG for example.
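The “logarithmic above 50%” behavior matches the HLG transfer curve, so here is a small sketch of it. The constants and the two-piece shape are taken from the HLG OETF as defined in ITU-R BT.2100; the function name and the sample values are my own. HDR10 and Dolby Vision use a different curve (PQ), so treat this as an illustration of HLG only.

```python
import math

# HLG constants from ITU-R BT.2100.
A = 0.17883277
B = 1.0 - 4.0 * A
C = 0.5 - A * math.log(4.0 * A)


def hlg_oetf(scene_light: float) -> float:
    """Map normalized linear scene light (0..1) to an HLG signal value (0..1)."""
    if not 0.0 <= scene_light <= 1.0:
        raise ValueError("scene_light must be in the range 0..1")
    if scene_light <= 1.0 / 12.0:
        # Lower part of the curve: square-root (gamma-like) segment.
        return math.sqrt(3.0 * scene_light)
    # Upper part of the curve: logarithmic segment, starting at signal 0.5.
    return A * math.log(12.0 * scene_light - B) + C


for light in (0.0, 1.0 / 12.0, 0.25, 0.5, 1.0):
    print(f"scene light {light:.4f} -> signal {hlg_oetf(light):.4f}")
```

The crossover sits at a signal value of 0.5: everything brighter than 1/12 of peak scene light is squeezed into the top half of the signal range by the log segment.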


There are a few issues in the digital video industry right now. One is that there is no standardized meaning of HDR: there are competing standards that aren’t compatible with each other, and not all screens are compatible with each of the standards. It reminds me of Blu-ray vs. HD DVD. Hopefully one will win out so we can all use the same standard. Next, the maximum brightness a TV can produce, even when complying with one of the standards, can vary wildly, so “HDR” on one screen will look totally different on another screen, maybe even worse than SDR. Dolby Vision tries to address this by allowing colorists to encode multiple color grades, corresponding to the maximum brightness levels of different screens, into the same video, which then plays back with the grade meant for each TV based on its maximum brightness. However, this means that to use Dolby Vision you have to color grade and color correct multiple times for the same video, which is a nightmare. Last but not least, not everybody has an HDR screen, so the media is going to look wildly different depending on the screen the audience is watching on.

DJI’s use of “HDR” is closer to the definition used in digital photography, which is to take multiple exposures of the same image and combine them to fit the dynamic range of the scene into the SDR range, increasing the number of levels (stops) between the brightest and darkest parts of the image far beyond what would be possible with a single exposure. The contrast ratio remains the same, but this means that no special hardware is required and it will look the same on all screens. This is enabled by DJI’s inclusion of the Quad Bayer sensor on the MA2, which can divide up the sensor and take different exposures simultaneously. Whether this is better or worse in theory than using contrast ratio like the HDR video standards is largely a matter of opinion, and in practice it is highly dependent on the screen you are watching on and how the media is color graded. For the vast majority of screens currently in use, the way DJI is doing it is likely going to look superior.
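To make the multiple-exposure idea concrete, here is a toy sketch for a single pixel. It is a generic exposure merge followed by a simple Reinhard-style tone map, not DJI’s actual pipeline, which I don’t know. It assumes a linear sensor response, pixel values normalized to 0..1, and exposure times in arbitrary relative units; all names are hypothetical.

```python
def merge_exposures(samples):
    """Estimate relative scene radiance for one pixel.

    samples: list of (pixel_value, exposure_time) pairs, pixel_value in 0..1.
    Assumes a linear sensor, so radiance is roughly pixel_value / exposure_time.
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for value, exposure in samples:
        # Hat weight: trust mid-tones, distrust near-black (noisy)
        # and near-white (clipped) readings.
        weight = 1.0 - abs(2.0 * value - 1.0)
        weighted_sum += weight * (value / exposure)
        weight_total += weight
    if weight_total == 0.0:
        # Every reading was fully black or fully clipped:
        # fall back to the shortest exposure.
        value, exposure = min(samples, key=lambda s: s[1])
        return value / exposure
    return weighted_sum / weight_total


def tone_map(radiance):
    """Reinhard-style global operator: compress 0..infinity into 0..1 for SDR."""
    return radiance / (1.0 + radiance)


# A bright highlight: clipped in the long exposure, usable in the short one.
highlight = merge_exposures([(0.5, 1.0), (1.0, 4.0)])
# A shadow: weak in the short exposure, well exposed in the long one.
shadow = merge_exposures([(0.1, 1.0), (0.4, 4.0)])

print(f"highlight: radiance {highlight:.3f} -> SDR value {tone_map(highlight):.3f}")
print(f"shadow:    radiance {shadow:.3f} -> SDR value {tone_map(shadow):.3f}")
```

The point is that the merged result keeps usable detail from both ends of the scene, and the tone map then squeezes it into the same 0..1 range every SDR screen already handles.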

It will be interesting to see how HDR video moves forward, because I imagine the popular HDR video standards were created on the assumption that it wasn’t possible to get multiple exposures in video. As we can see, that is no longer the case. Will HDR TVs go the way of the 3D TV and die, or will the next developments be toward combining the benefits of multiple exposures AND increased contrast ratio?

We haven’t even gotten into color yet, but I’ve taken way too much time on this.